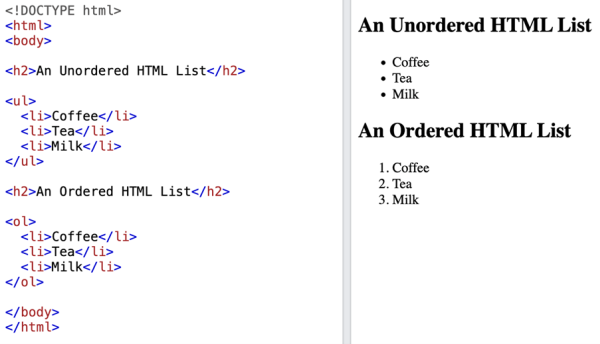

Since the dawn of time, at least for the modern front-end web engineer, the task of designing and building a list has been paramount. From its humble beginnings, the list has evolved, starting with the most basic form (Figure 1) and reaching incredible heights, both UX- and engineering-wise. This article broadly explores the engineering mindset required to build a modern list.

The examples below are in ReactJS, but the principles apply to any framework, even bare JS.

Let’s start!

Level 1. – Small amount of static data

This is by far the simplest requirement. We know the data we wish to present beforehand, so we do not have to worry about communicating with a server or optimizing the UI. The dataset is also small: by small, I mean fewer than roughly 100 to 1,000 items, depending on the complexity of the rendered list items.

```jsx
const FruitList = () => {
  const fruits = ["Apple", "Pear", "Orange"];

  return (
    <ul>
      {fruits.map((fruit) => (
        // React needs a stable key per item; the fruit names are unique here
        <li key={fruit}>{fruit}</li>
      ))}
    </ul>
  );
};
```

Figure 2 – output image

Level 2. – Remote data

We are often tasked with presenting data from a server. Before designing the new list, we should understand the underlying requirement (and how it might evolve in the future). In particular, we should consider:

- If the dataset is small enough that fetching all of it at once won’t harm the server load, and

- If fetching the entire dataset won’t strain the user’s data plan (e.g., fetching a 10,000-item, 2 MB dataset when the average user interacts with only a dozen items is wasteful).
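To make the data-plan concern concrete, here is a back-of-the-envelope calculation for the example above. It is a rough sketch that assumes every item has about the same size and treats 2 MB as 2,000,000 bytes; the helper name `estimateFetchAllWaste` is hypothetical.

```javascript
// Hypothetical helper: estimates how much of a "fetch everything" payload
// the user actually needs. Assumes all items are roughly the same size.
function estimateFetchAllWaste({ totalItems, totalBytes, itemsUsed }) {
  const bytesPerItem = totalBytes / totalItems;
  const usefulBytes = bytesPerItem * itemsUsed;
  return {
    bytesPerItem,
    usefulBytes,
    // Fraction of the downloaded bytes the user never looks at
    wastedFraction: 1 - usefulBytes / totalBytes,
  };
}

// The example from the text: 10,000 items, 2 MB, about a dozen items used
const estimate = estimateFetchAllWaste({
  totalItems: 10000,
  totalBytes: 2000000,
  itemsUsed: 12,
});
console.log(estimate.bytesPerItem); // 200
console.log(estimate.usefulBytes); // 2400
```

Under these assumptions, about 99.9% of the download is never used, which is a strong hint that the list needs pagination.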

```jsx
import { useEffect, useState } from "react";

const FruitList = () => {
  const [fruits, setFruits] = useState([]);

  useEffect(() => {
    // Fetch the whole (small) dataset once, when the component mounts
    const fetchAllFruits = async () => {
      const response = await fetch("/api/fruits");
      const parsedFruits = await response.json();
      setFruits(parsedFruits);
    };
    fetchAllFruits();
  }, []);

  return (
    <ul>
      {fruits.map((fruit) => (
        <li key={fruit}>{fruit}</li>
      ))}
    </ul>
  );
};
```

However, if any of the assumptions above are false, we should move forward with a pagination strategy.

Level 3. – Paginated data

Pagination is the process of splitting content into discrete pages, which circumvents the limitations of the “fetch all data at once” approach. At this point, we should again carefully consider what the product specification requires our list to do. There are many ways to design pagination, and we should be pragmatic about it, because the choice will influence how easily the list can be extended later. We should consider:

- If the pagination UI specifies a classic pagination view (with the next page/page number buttons) and not an infinite scroll view.

- If the dataset does not change frequently.

- If it is okay for the user to miss certain data from the list or potentially see duplicate entries.

Offset-based pagination

With this approach, we request data from the server by specifying how many items we need per page (the limit) and how many items to skip (the offset, calculated as the number of already fetched pages multiplied by the page limit). The server responds with the requested chunk of the dataset, and it can also include list-related metadata such as the total number of pages or whether more pages exist. The API design should conform to product requirements as closely as possible. If all of the points above hold, offset-based pagination is usually the pragmatic choice.
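The offset arithmetic can be sketched as a plain JavaScript function that plays the role of the server. This is a minimal in-memory sketch, not a real API: the name `getOffsetPage` and the response shape (`items`, `hasMore`, `totalPages`) are assumptions chosen to mirror the metadata mentioned above.

```javascript
// Hypothetical server-side handler for offset-based pagination.
// `dataset` stands in for a database table.
function getOffsetPage(dataset, { offset, limit }) {
  const items = dataset.slice(offset, offset + limit);
  return {
    items,
    // Optional metadata the client can use to render the pagination UI
    hasMore: offset + limit < dataset.length,
    totalPages: Math.ceil(dataset.length / limit),
  };
}

// Client side: offset = number of fetched pages * page limit
const pageLimit = 2;
const fruits = ["Apple", "Pear", "Orange", "Plum", "Kiwi"];
const fetchedPages = 1;
const secondPage = getOffsetPage(fruits, {
  offset: fetchedPages * pageLimit,
  limit: pageLimit,
});
// → items ["Orange", "Plum"], hasMore true, totalPages 3
console.log(secondPage);
```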

Figure 3 – classic pagination UI

Cursor-based pagination

On the other hand, if some of the points above are false, we are probably better off with a cursor-based pagination approach. A cursor is simply an identifier of a dataset item, which we send to the server when requesting the next page of data. Instead of telling the server the offset from the start of the dataset, we specify the item (the cursor) after which we need more items.
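A cursor-based request can be sketched in the same in-memory style. This sketch assumes each item has a unique, ascending numeric `id` that doubles as the cursor, roughly like a SQL `WHERE id > :cursor ORDER BY id LIMIT :limit` query; `getPageAfterCursor` and its response shape are hypothetical.

```javascript
// Hypothetical server-side handler for cursor-based pagination.
// Assumes `dataset` is sorted by a unique, ascending numeric `id`.
function getPageAfterCursor(dataset, { after = -Infinity, limit }) {
  // Only items after the cursor matter; their absolute position does not
  const remaining = dataset.filter((item) => item.id > after);
  const items = remaining.slice(0, limit);
  return {
    items,
    // The id of the last returned item becomes the cursor for the next request
    nextCursor: items.length > 0 ? items[items.length - 1].id : null,
    hasMore: remaining.length > limit,
  };
}

const messages = [
  { id: 1, text: "Hi" },
  { id: 2, text: "Hello" },
  { id: 3, text: "How are you?" },
  { id: 4, text: "Fine, thanks" },
];
const firstPage = getPageAfterCursor(messages, { limit: 2 });
const nextPage = getPageAfterCursor(messages, {
  after: firstPage.nextCursor,
  limit: 2,
});
// → ["How are you?", "Fine, thanks"]
console.log(nextPage.items.map((message) => message.text));
```

Because the query is anchored to an item rather than a position, the request still returns the right items even if other items are inserted or deleted before the cursor.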

It might not be evident at first glance how cursors circumvent the limits of offset pagination, so let’s consider the following examples:

- Imagine we have a chat app with thousands of messages, and we wish to search for a specific message. We type in our query, and the server responds with a list of matching messages. To explore the conversation around one of them, we click or tap that search result, and we now need to present messages that could sit anywhere within our dataset. How would we achieve this using offset-based pagination? It might be possible, but the elegant solution here is to use cursor-based pagination (sending the message id, for example) and request a page’s worth of items before and after the cursor.

- Suppose we are building a file explorer app and wish to allow URL linking to files in an extensive list. We will encounter challenges similar to the chat app example, but with added complexity. Instead of worrying about the whole dataset, we can use cursor-based pagination to our advantage: we only send cursors (the edges of the data fetched so far) and specify how much data we require.

- Another use case for cursor-based pagination is an infinite feed within a social media app. If we use offset-based pagination and request the first 50 feed items, the underlying server data might change by the time we scroll to the end of the page, for example because new feed items were inserted at the start of the dataset. If we then request the second chunk of 50 items, every item has shifted down, so we risk receiving items we already got with the first page. Conversely, if items were removed from the start of the dataset while we were browsing, some items shift into the range we already fetched, and requesting the second page skips them, leaving a gap in the feed.
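The feed scenario can be reproduced with a toy in-memory simulation. This sketch assumes post ids increase over time, so “older than the cursor” is a simple `id < cursor` comparison; the helpers `byOffset` and `byCursor` are hypothetical.

```javascript
// A toy feed, newest first. Post ids grow over time.
let feed = [6, 5, 4, 3, 2, 1].map((id) => ({ id }));

// Offset-based: skip `offset` items from the start of the current feed
const byOffset = (offset, limit) => feed.slice(offset, offset + limit);

// Cursor-based: posts older than the cursor (smaller id), newest first
const byCursor = (before, limit) =>
  feed.filter((post) => post.id < before).slice(0, limit);

const firstPage = byOffset(0, 3); // posts 6, 5, 4

// While the user scrolls, a new post is inserted at the start of the feed
feed = [{ id: 7 }, ...feed];

// Offset 3 now points at post 4, which the user has already seen
const offsetSecondPage = byOffset(3, 3);
// The cursor is the last post of the first page, so nothing repeats
const cursorSecondPage = byCursor(firstPage[firstPage.length - 1].id, 3);

console.log(offsetSecondPage.map((post) => post.id)); // [4, 3, 2]
console.log(cursorSecondPage.map((post) => post.id)); // [3, 2, 1]
```

The offset request repeats post 4 because every item moved one position down, while the cursor request picks up exactly where the first page ended.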

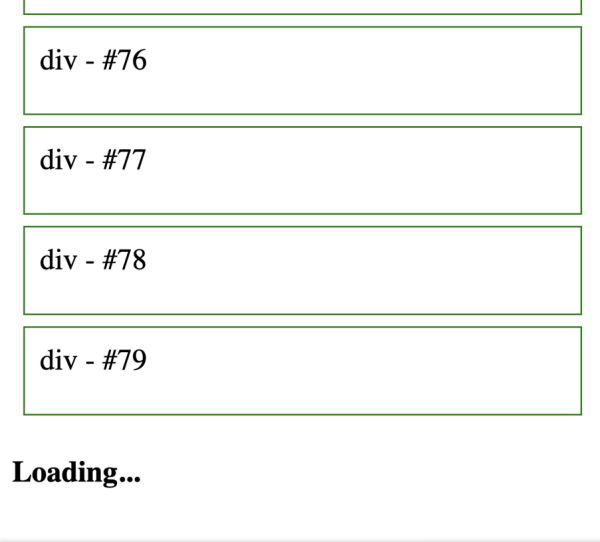

Level 4. – Infinite scroll list

Infinite scrolling lets the user seamlessly scroll through large lists, which usually rely on some pagination approach under the hood.

Figure 4 – infinite scroll pagination UI

Important points to keep in mind when designing an infinite scroll component are:

- Encapsulate the infinite scroll logic as much as possible.

- Keep the API surface minimal.

- Make the infinite scroll component independent of the pagination approach used to fetch data.
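The three points above can be sketched as a small, framework-agnostic controller. This is an illustration under stated assumptions, not a library API: `createInfiniteScroll` and the `loadPage` callback (which resolves to `{ items, hasMore }`) are hypothetical names. Because the controller only calls `loadPage`, it does not care whether pages come from offsets or cursors.

```javascript
// Hypothetical infinite scroll controller. Its whole API is `state` and
// `loadMore`; how a page is fetched is delegated to `loadPage`.
function createInfiniteScroll(loadPage) {
  const state = { items: [], hasMore: true, isLoading: false };

  // Call this when the user scrolls near the end of the list
  async function loadMore() {
    // Ignore repeated triggers while a request is in flight or when done
    if (state.isLoading || !state.hasMore) return;
    state.isLoading = true;
    try {
      const { items, hasMore } = await loadPage();
      state.items = [...state.items, ...items];
      state.hasMore = hasMore;
    } finally {
      state.isLoading = false;
    }
  }

  return { state, loadMore };
}

// Example: an in-memory, offset-based `loadPage` (hypothetical data source)
const allFruits = ["Apple", "Pear", "Orange", "Plum", "Kiwi"];
let offset = 0;
const scroller = createInfiniteScroll(async () => {
  const items = allFruits.slice(offset, offset + 2);
  offset += items.length;
  return { items, hasMore: offset < allFruits.length };
});
```

In a browser, `loadMore` could be triggered from an `IntersectionObserver` callback watching a sentinel element placed after the last list item; swapping to cursor-based pagination only means passing a different `loadPage`.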

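```jsx
import { useEffect, useRef, useState } from "react";

const pageLimit = 50;

// InfiniteScroll is assumed to be an existing component that renders the list
// container and calls `loadMore` near the end of the list while `hasMore` is true.
const FruitList = () => {
  const [fruits, setFruits] = useState([]);
  const [page, setPage] = useState(0);
  const [hasMore, setHasMore] = useState(true);
  const isLoading = useRef(false);

  const loadMoreFruits = async () => {
    if (isLoading.current) return; // a page is already being fetched
    isLoading.current = true;
    try {
      const response = await fetch(
        `/api/fruits?offset=${page * pageLimit}&limit=${pageLimit}`
      );
      const parsedFruits = await response.json();
      // Spread the new page so the list stays flat
      setFruits((prevFruits) => [...prevFruits, ...parsedFruits]);
      // A page shorter than the limit means we reached the end
      if (parsedFruits.length < pageLimit) {
        setHasMore(false);
      }
      setPage((prevPage) => prevPage + 1);
    } finally {
      isLoading.current = false;
    }
  };

  // Load the first page on mount
  useEffect(() => {
    loadMoreFruits();
  }, []);

  return (
    <InfiniteScroll loadMore={loadMoreFruits} hasMore={hasMore}>
      {fruits.map((fruit) => (
        <li key={fruit}>{fruit}</li>
      ))}
    </InfiniteScroll>
  );
};
```

Level 5. – List virtualization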

So far, we have mostly talked about designing a list around fetching data. There is, however, an even bigger topic at hand: displaying substantial amounts of data, which usually comes hand in hand with infinite scrolling. Once a list grows beyond a handful of items, rendering the large dataset inefficiently will noticeably slow down the user’s browser.

One approach to displaying large datasets client-side is list virtualization. Even though our dataset may contain thousands of items, we do not want the browser to render all of them all the time, since the user cannot see anything outside the viewport. We want to render only what the user can see while preserving the scroll behavior of the enclosing container, as if all the items were rendered. We achieve this by inserting and removing list items as the scroll position changes and offsetting the rendered items via position or transform.
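The windowing arithmetic behind virtualization can be sketched for the simplest case, where every item has the same height. This is an illustration under that assumption (variable-height items need measuring and are more involved); `getVisibleRange` and its `overscan` parameter are hypothetical names, not a library API.

```javascript
// Hypothetical helper for a fixed-height virtualized list: given the scroll
// position, work out which items to render and where to place them.
function getVisibleRange({
  scrollTop,
  viewportHeight,
  itemHeight,
  itemCount,
  overscan = 2,
}) {
  // A spacer of this height keeps the scrollbar as if every item were rendered
  const totalHeight = itemCount * itemHeight;
  const firstVisible = Math.floor(scrollTop / itemHeight);
  const lastVisible = Math.ceil((scrollTop + viewportHeight) / itemHeight) - 1;
  // Render a few extra items above and below to avoid blank flashes
  const start = Math.max(0, firstVisible - overscan);
  const end = Math.min(itemCount - 1, lastVisible + overscan);
  return {
    start,
    end,
    // Shift the rendered slice down to where its first item belongs
    offsetY: start * itemHeight,
    totalHeight,
  };
}

// 10,000 rows of 30px in a 300px viewport, scrolled to 4,500px
const range = getVisibleRange({
  scrollTop: 4500,
  viewportHeight: 300,
  itemHeight: 30,
  itemCount: 10000,
});
// → start 148, end 161, offsetY 4440, totalHeight 300000
console.log(range);
```

A component would render only items `start` through `end` inside a container of height `totalHeight`, shift them down by `offsetY` (e.g., with `transform: translateY(...)`), and recompute the range on scroll. Here only 14 of the 10,000 items are in the DOM.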

There are several ways to implement list virtualization, and a deep dive into them is out of the scope of this article. Nevertheless, here are a few points that can help when deciding whether virtualization is required:

- Virtualization is not required if the rendered list will never grow beyond a couple of “screens” of content. In fact, it is discouraged as there is a small performance overhead if we are virtualizing content for no reason.

- Virtualization is not required if auditing the app’s performance when interacting with large lists shows that it already achieves the desired results (e.g., we are not dropping frames).

- If we want list items to preserve certain functionality even when scrolled out of view (such as continuing to play a song or video), virtualization makes that harder, since it removes out-of-view list items from the DOM (potentially stopping playback).

- Virtualization is almost unavoidable if we have a hard requirement to render lists with thousands or more items.

Conclusion

Whether making a simple list for our personal to-do list project or building a next-gen, virtualized, cursor-paginated, infinite-scroll NFT marketplace dashboard, we should carefully analyze the requirements in front of us and plan thoroughly when designing a modern list.

As front-end web engineers, understanding the complexity of building a modern list and having a well-defined decision-making tree can help us steer the entire team. We can suggest UI changes to designers that significantly lower the list’s technical complexity, and we can help design the server API, since without our input, back-end developers usually don’t know what choosing one pagination strategy over another implies from a front-end technical perspective.
